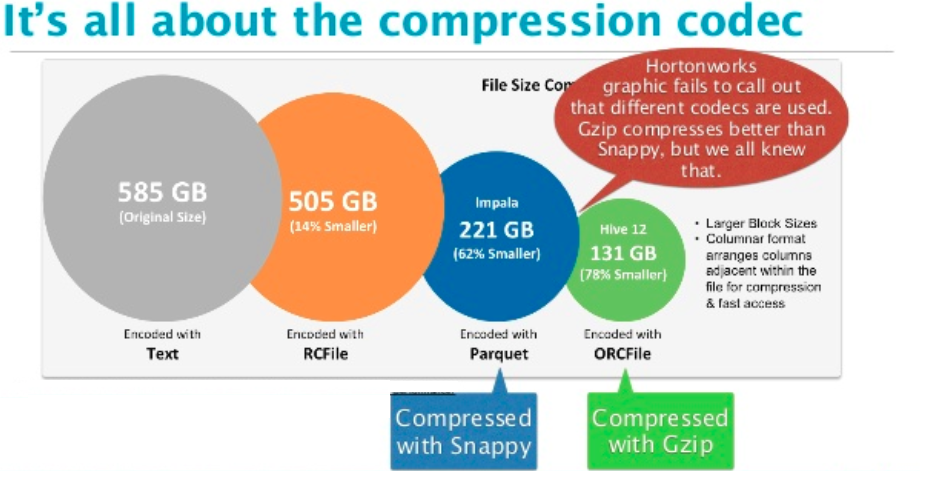

Choosing an appropriate file format is essential, whether your data transits on the wire or is stored at rest. Each file format comes with its own advantages and disadvantages. We covered them in a previous article presenting and comparing the most popular file formats in Big Data. In a follow-up article, we will compare their performance according to multiple scenarios. The compression used for a given format greatly impacts query performance. This article prepares the tables needed for that follow-up article and takes the opportunity to compare the compression algorithms in terms of storage space and generation time.

In Snappy, a copy refers to the dictionary (the just-decompressed data). The offset is the shift from the current position back into the already decompressed stream. The length is the number of bytes to copy from the dictionary. The size of the dictionary was limited by the 1.0 Snappy compressor to 32,768 bytes, and updated to 65,536 in version 1.1. The complete official description of the Snappy format can be found in the Google GitHub repository.

Consider an example input beginning with the sentence "Wikipedia is a free, web-based, collaborative, multilingual encyclopedia project." In the compressed stream, the first 2 bytes, ca 02, are the length, as a little-endian varint (see Protocol Buffers for the varint specification). In this example, all common substrings with four or more characters were eliminated by the compression process. More common compressors can compress this better. Unlike compression methods such as gzip and bzip2, Snappy uses no entropy encoding to pack the alphabet into the bit stream.

Snappy distributions include C++ and C bindings. Third-party bindings and ports include C#, Common Lisp, Crystal, Erlang, Go, Haskell, Lua, Java, Nim, Node.js, Perl, PHP, Python, R, Ruby, Rust, Smalltalk, and OpenCL.
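To make the copy operation concrete, here is a minimal Python sketch of how a decompressor might apply one copy, given an offset back into the already-decompressed data and a length in bytes. The function name `apply_copy` and the use of a `bytearray` as output buffer are assumptions made for illustration; this is not the code of the Snappy library itself.

```python
def apply_copy(out: bytearray, offset: int, length: int) -> None:
    """Append `length` bytes read `offset` bytes back from the end of `out`.

    Hypothetical helper for illustration only, not part of Snappy's API.
    """
    if offset <= 0 or offset > len(out):
        raise ValueError("offset points outside the already-decompressed data")
    start = len(out) - offset
    # Copy one byte at a time so that the copy may overlap the bytes it is
    # producing (length > offset), which repeats a short pattern.
    for i in range(length):
        out.append(out[start + i])


buf = bytearray(b"snappy ")
apply_copy(buf, offset=7, length=6)   # re-emit the word seen 7 bytes ago

run = bytearray(b"ab")
apply_copy(run, offset=2, length=6)   # overlapping copy repeats "ab"
```

After these calls, `buf` holds `snappy snappy` and `run` holds `abababab`. The byte-by-byte loop is a deliberate simplification: it keeps overlapping copies correct at the cost of speed.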

The remaining bytes in the stream are encoded using one of four element types. The element type is encoded in the lower two bits of the first byte (tag byte) of the element:

- 00 – Literal: uncompressed data; the upper 6 bits are used to store the length (len-1) of the data. Lengths larger than 60 are stored in a 1-4 byte integer indicated by a 6-bit length of 60 (1 byte) to 63 (4 bytes).
- 01 – Copy with length stored as 3 bits and offset stored as 11 bits; one byte after the tag byte is used for part of the offset.
- 10 – Copy with length stored as 6 bits of the tag byte and offset stored as a two-byte integer after the tag byte.
- 11 – Copy with length stored as 6 bits of the tag byte and offset stored as a four-byte little-endian integer after the tag byte.
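The four element types can be told apart with a few bit operations on the tag byte. The sketch below is a hypothetical helper (`decode_element` is not a Snappy API) that reads one element header and returns its kind, length and offset. The length biases used for copies (len-4 for the 3-bit form, len-1 for the 6-bit forms) and the little-endian byte order of the two-byte offset are taken from the official format description rather than from the text above, so treat them as assumptions to verify against that document.

```python
def decode_element(data: bytes, pos: int):
    """Decode the element header starting at data[pos].

    Returns (kind, length, offset, next_pos). `offset` is None for literals;
    `next_pos` is the index of the first byte after the header.
    Illustrative sketch only, with no bounds checking.
    """
    tag = data[pos]
    kind = tag & 0b11      # element type: lower two bits of the tag byte
    upper = tag >> 2       # remaining six bits
    pos += 1
    if kind == 0b00:       # literal
        if upper < 60:
            length = upper + 1                  # len-1 stored in the tag
        else:
            n = upper - 59                      # 60..63 -> 1..4 extra bytes
            length = int.from_bytes(data[pos:pos + n], "little") + 1
            pos += n
        return "literal", length, None, pos
    if kind == 0b01:       # copy with 11-bit offset
        length = (upper & 0b111) + 4            # assumed bias: len-4 in 3 bits
        offset = ((tag >> 5) << 8) | data[pos]  # 3 high bits + 1 extra byte
        return "copy", length, offset, pos + 1
    n = 2 if kind == 0b10 else 4                # copy with 2- or 4-byte offset
    length = upper + 1                          # assumed bias: len-1 in 6 bits
    offset = int.from_bytes(data[pos:pos + n], "little")
    return "copy", length, offset, pos + n


# A literal of 5 bytes: tag 0x10 has type 00 and upper bits 4 (len-1).
kind, length, offset, next_pos = decode_element(b"\x10hello", 0)
```

Here `kind` is `"literal"`, `length` is 5 and `next_pos` is 1, so the literal bytes are `data[1:6]`. A full decompressor would loop over such headers, appending literal bytes or applying copies until the declared uncompressed length is reached.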

The compression ratio is 20–100% lower than gzip. Snappy is widely used in Google projects like Bigtable, MapReduce and in compressing data for Google's internal RPC systems. It can be used in open-source projects like MariaDB ColumnStore, Cassandra, Couchbase, Hadoop, LevelDB, MongoDB, RocksDB, Lucene, Spark, and InfluxDB. Decompression is tested to detect any errors in the compressed stream. Snappy does not use inline assembler (except for some optimizations) and is portable.

Snappy encoding is not bit-oriented, but byte-oriented (only whole bytes are emitted or consumed from a stream). The format uses no entropy encoder, like a Huffman tree or an arithmetic encoder. The first bytes of the stream are the length of the uncompressed data, stored as a little-endian varint, which allows for use of a variable-length code. The lower seven bits of each byte are used for data and the high bit is a flag to indicate the end of the length field.
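The length prefix described above can be decoded in a few lines. The following sketch assumes the usual varint convention in which a set high bit means that more bytes follow; `read_varint` is a name chosen for illustration, not a function of the Snappy library. It is applied to the two bytes `ca 02` mentioned for the example stream.

```python
def read_varint(data: bytes, pos: int = 0):
    """Return (value, next_pos) for the little-endian varint at data[pos].

    Illustrative helper with no bounds or overflow checks.
    """
    value = 0
    shift = 0
    while True:
        byte = data[pos]
        pos += 1
        value |= (byte & 0x7F) << shift   # lower seven bits carry data
        if (byte & 0x80) == 0:            # high bit clear: last byte
            return value, pos
        shift += 7


# 0xca -> low bits 0x4a (74), continuation set; 0x02 -> 2 << 7 = 256.
length, header_size = read_varint(bytes.fromhex("ca02"))
```

With this input, `length` is 330 (74 + 256) and `header_size` is 2, so the element stream starts at the third byte.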

Snappy (previously known as Zippy) is a fast data compression and decompression library written in C++ by Google, based on ideas from LZ77 and open-sourced in 2011. It does not aim for maximum compression, or compatibility with any other compression library; instead, it aims for very high speeds and reasonable compression. Compression speed is 250 MB/s and decompression speed is 500 MB/s using a single core of a circa 2011 "Westmere" 2.26 GHz Core i7 processor running in 64-bit mode.
